For instance, imagine you are lifting a juice box from your breakfast table. When your internal model of the box matches the actual object (for example, if the contents of the box are visible), you know how to interact with it and apply the appropriate force. However, when the juice box does not match your expectation, you immediately notice the disagreement between the world and your internal model. This happens, for example, when you lift an empty box that you assume to be full: your predictions are wrong, and the lifting motion does not go according to plan.

Internal models are commonly used in robots and computers to help them plan their movements, respond rapidly to new situations and predict future events. Traditionally, these internal models are designed and implemented by human engineers. Hand-designed internal models are of limited use in dynamic environments, however, because changes to an agent or its surroundings may make the pre-specified models invalid. Hand-designed models also become extremely complex and difficult to specify as agents and their environments become more complex. In EPEC, we have therefore studied techniques for generating internal models automatically, e.g. by use of machine learning and evolutionary algorithms.

Multiple Internal Models

Humans and animals display a remarkable ability to maintain and utilize multiple internal models, allowing us to plan and act appropriately in a wide variety of scenarios. For instance, having different models of different people allows us to adapt our social interactions to different communication partners, and because I have different internal models of apples and tennis balls, I know I can eat the former and bounce the latter off the wall. Think about the stunning variety of real-world objects you can accurately imagine and simulate mentally. Remarkably, you can do so with a very low degree of interference: even though tennis balls and oranges are quite similar, you would never bring an orange to a tennis match.

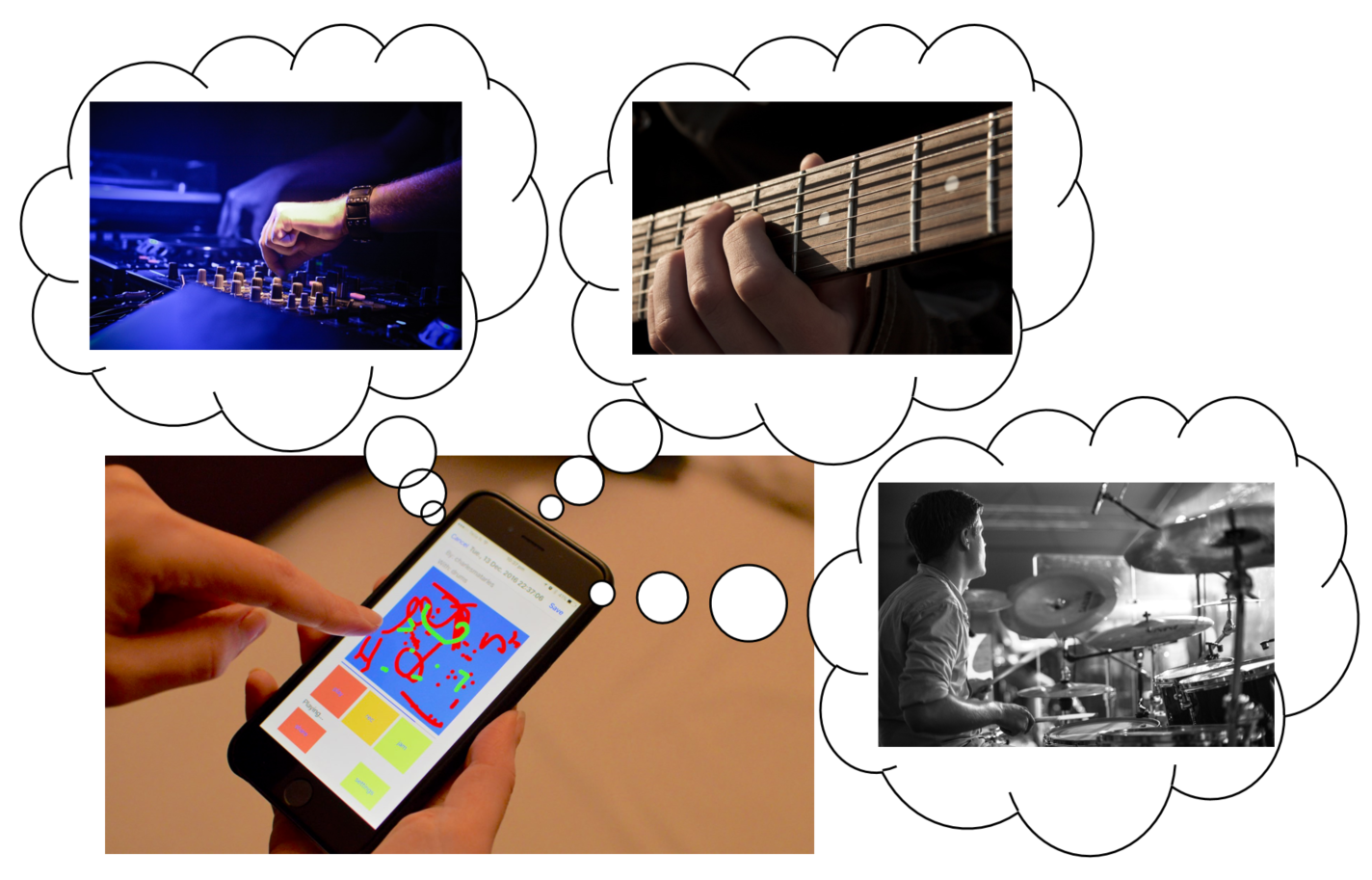

With regard to the EPEC application areas, robotics and interactive music systems, we envision several situations where multiple internal models will be beneficial. In robotics, multiple models are useful when interacting with a complex environment containing many different human users, objects and other robots. A robot may even benefit from having multiple models of itself. For instance, a robot that can reconfigure its morphology can reach different areas depending on how it is configured. In interactive music, multiple internal models can aid in adapting a digital instrument to different users. Such adaptation could range from adjusting the instrument's complexity to the user's degree of competence, to giving users the ability to play with an ensemble consisting of models (simulations) of other users.

It has been suggested that our ability to maintain a large variety of internal models without interference is facilitated by the modular organization of our brain. Computational models inspired by this have shown the ability to generate multiple internal models by separating knowledge into several modules and enforcing a competition between those modules during training. We explored whether such modularly separated internal models can self-organize, by applying recent insights from evolving neural networks. Our results, published at the 2017 European Conference on Artificial Life, offered new insights into how evolved modularity allows neural networks to maintain multiple internal models.

Transferring Skills in Model-based Reinforcement Learning

Reinforcement Learning problems are characterized by agents learning to perform a task through infrequent rewards. A typical example of a Reinforcement Learning problem is game playing. For instance, one could learn to play a game such as chess by getting a reward for a positive achievement (e.g. eliminating one of the opponent's pieces) and a penalty for a negative one (e.g. losing one of your own pieces). By learning which actions, in which situations, lead to rewards and penalties, one could learn a good chess-playing strategy. Robotics is another area where many problems can be considered Reinforcement Learning tasks: we don't always know exactly how a robot should move to reach some goal, but we can recognize if it did something useful or something potentially dangerous, and give rewards and penalties accordingly.
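To make the idea of learning from rewards concrete, here is a minimal sketch of tabular Q-learning, one common Reinforcement Learning algorithm. It is only an illustration: the five-position line world, the single reward at the goal, and all names and parameter values are assumptions made for this example, not part of the EPEC work.

```python
import random

# Hypothetical toy world: positions 0..4 on a line, reward only at position 4.
N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # step left or step right


def step(state, action):
    """Move along the line (clipped at the ends) and report the reward."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward, nxt == GOAL


def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Learn action values from random exploration and infrequent rewards."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(50):  # cap the episode length
            action = rng.choice(ACTIONS)  # purely random exploration
            nxt, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            # Nudge the estimate towards reward + discounted future value.
            q[(state, action)] += alpha * (
                reward + gamma * best_next - q[(state, action)]
            )
            state = nxt
            if done:
                break
    return q


def greedy_policy(q):
    """Best known action for every non-goal position."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]


if __name__ == "__main__":
    print(greedy_policy(q_learning()))
```

Even though the agent is only rewarded at the very end, the learned values propagate backwards, so the greedy policy should end up stepping right from every position.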

In recent years, deep learning has revolutionized Reinforcement Learning, leading to breakthroughs in many different areas, such as playing board games, controlling robots and playing complex computer games.

Much of the progress in recent years has come from model-free Reinforcement Learning (RL), that is, the idea of learning to solve a problem without forming an explicit internal model of the environment. Model-based RL attempts to solve two key challenges with model-free approaches: 1) they require enormous amounts of training data, and 2) there is no straightforward way to transfer a learned policy to a new task in the same environment. To address these challenges, model-based RL first learns a predictive model of the environment, and then uses this model to make a plan that solves the problem. Despite these advantages of model-based algorithms, model-free RL has so far been most successful in complex environments. A key reason for this is that model-based RL is likely to produce very bad policies if the learned predictive model is imperfect, which it will be for most complex environments.
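The two-step recipe described above (learn a predictive model, then plan with it) can be sketched in a few lines. This is a toy illustration under stated assumptions: the five-position line world, the lookup-table model, and the breadth-first planner are all chosen for brevity, and real model-based RL systems typically use learned neural network models and more sophisticated planners.

```python
import random

# Hypothetical toy world: positions 0..4 on a line.
N_STATES = 5
ACTIONS = (-1, +1)  # step left or step right


def true_step(state, action):
    """The real environment, which the agent can only sample from."""
    return min(max(state + action, 0), N_STATES - 1)


def learn_model(n_samples=200, seed=0):
    """Step 1: learn a predictive model from random interaction."""
    rng = random.Random(seed)
    model = {}
    for _ in range(n_samples):
        s = rng.randrange(N_STATES)
        a = rng.choice(ACTIONS)
        model[(s, a)] = true_step(s, a)  # deterministic world: store outcome
    return model


def plan(model, start, goal):
    """Step 2: breadth-first search inside the learned model only."""
    frontier = [(start, [])]
    visited = {start}
    while frontier:
        state, path = frontier.pop(0)
        if state == goal:
            return path
        for a in ACTIONS:
            nxt = model.get((state, a))  # None if never experienced
            if nxt is not None and nxt not in visited:
                visited.add(nxt)
                frontier.append((nxt, path + [a]))
    return None  # the model knows no route to the goal


if __name__ == "__main__":
    model = learn_model()
    print(plan(model, start=0, goal=4))  # original task
    print(plan(model, start=4, goal=1))  # new task, same model, no retraining
```

The second call hints at the transfer benefit: a new goal in the same environment needs only a new plan, not new training data. The sketch also shows the weakness mentioned above, since any transition that is missing or wrong in `model` directly corrupts the plan.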

One way to alleviate this problem, making predictive models more feasible to learn and thus more useful, is to keep the predictive model as small as possible and learn only what is necessary to solve the problem. For instance, if you are trying to model a car-driving scenario, it is not important to perfectly model the flight of birds you see in the distance or the exact colour of the sky. To drive safely and efficiently, we need to model a few key effects, such as the effect of our actions (accelerate/brake/turn) on the distance to pedestrians, on the speed of the car, and so on. This insight is the idea behind a recent technique called "Direct Future Prediction (DFP)", which solves RL problems by learning to predict how possible actions affect the most important metrics.
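The core idea, predicting only a few important measurements per action and picking the action whose predicted outcome best matches a goal, can be sketched as follows. This is a heavily simplified illustration and not the DFP implementation: DFP uses a deep network conditioned on sensory input, whereas this sketch assumes a per-action average, and the driving measurements, experience tuples and goal weights are all invented for the example.

```python
# Hypothetical driving example; all numbers are invented for illustration.
MEASUREMENTS = ("speed", "distance_to_pedestrian")
ACTIONS = ("accelerate", "brake", "turn")


def fit_predictor(experience):
    """Average the observed measurement changes for each action.

    experience: list of (action, (delta_speed, delta_distance)) tuples.
    """
    sums = {a: [0.0] * len(MEASUREMENTS) for a in ACTIONS}
    counts = {a: 0 for a in ACTIONS}
    for action, deltas in experience:
        counts[action] += 1
        for i, d in enumerate(deltas):
            sums[action][i] += d
    return {a: [s / counts[a] for s in sums[a]] for a in ACTIONS if counts[a]}


def choose_action(predictor, goal):
    """Pick the action whose predicted changes score highest under the goal.

    goal: one weight per measurement, saying how much we care about it.
    """
    def score(action):
        return sum(g * p for g, p in zip(goal, predictor[action]))
    return max(predictor, key=score)


experience = [
    ("accelerate", (2.0, -3.0)), ("accelerate", (1.0, -1.0)),
    ("brake", (-2.0, 1.0)), ("brake", (-1.0, 0.0)),
    ("turn", (0.0, 2.0)), ("turn", (0.0, 2.0)),
]
predictor = fit_predictor(experience)

if __name__ == "__main__":
    print(predictor["accelerate"])                 # [1.5, -2.0]
    print(choose_action(predictor, (1.0, 0.0)))    # accelerate
    print(choose_action(predictor, (0.2, 1.0)))    # turn
```

Note that the model says nothing about birds or the colour of the sky: it only covers the two measurements that matter for the task.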

As mentioned above, one of the potential benefits of model-based RL is that learned models could be transferred to new tasks, making it possible to solve new problems without learning from scratch. In a recent paper, we tested whether DFP models can be transferred to new tasks by having the agent learn new goals. As an example, imagine having learned a predictive model of traffic. If your goal is to drive as fast as possible (for instance, if you're driving an ambulance), you will act differently than if your goal is to drive as safely as possible, but you can use the same underlying internal model. We demonstrated that such goal adaptation has the potential to speed up learning on new tasks by transferring learned internal models.
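A rough sketch of goal adaptation is given below: the predictive model stays frozen, and only the goal weights are searched for on the new task. Everything here is an assumption made for illustration. The two-state predictor, the toy episode whose dynamics simply follow the predictor, the candidate goal vectors, and the exhaustive search over candidates are stand-ins, and they do not reproduce the goal-learning procedure used in the paper.

```python
# Frozen predictive model (hypothetical numbers):
# state -> action -> predicted change in (kills, health).
PREDICTOR = {
    "healthy": {"attack": (1.0, -1.0), "gather": (0.0, 0.5)},
    "hurt": {"attack": (0.5, -2.0), "gather": (0.0, 1.0)},
}


def act(state, goal):
    """Choose the action whose predicted changes score best under the goal."""
    options = PREDICTOR[state]
    return max(
        options,
        key=lambda a: sum(g * p for g, p in zip(goal, options[a])),
    )


def run_episode(goal, start_health, steps=10):
    """Toy task: return total kills, or 0 if the agent dies.

    For simplicity, the environment is assumed to behave exactly as the
    frozen model predicts.
    """
    health, kills = start_health, 0.0
    for _ in range(steps):
        state = "healthy" if health >= 3 else "hurt"
        d_kills, d_health = PREDICTOR[state][act(state, goal)]
        kills += d_kills
        health += d_health
        if health <= 0:
            return 0.0  # dying forfeits the score
    return kills


def adapt_goal(start_health, candidates):
    """Keep the model fixed and search only over goal weights."""
    return max(candidates, key=lambda g: run_episode(g, start_health))


# Candidate goals: (weight on kills, weight on health).
CANDIDATES = [(1.0, 0.0), (1.0, 0.3), (1.0, 0.7), (1.0, 1.5)]

if __name__ == "__main__":
    print(adapt_goal(20, CANDIDATES))  # easy task: (1.0, 0.0)
    print(adapt_goal(4, CANDIDATES))   # hard task: (1.0, 0.3)
```

In the easy task, the purely aggressive goal is good enough. In the hard task, with little starting health, the same frozen model performs best with a goal that also values health, which mirrors the ambulance example: different goals, one shared internal model.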

Following the original DFP paper, we demonstrated the technique on the game ViZDoom. In this game, the agent learns to eliminate alien monsters while gathering the resources (health and ammunition) it needs to stay alive. We demonstrated that a strategy learned in one game scenario can transfer to a different, much more difficult one, simply by learning new goals. In the difficult scenario below, the agent uses the internal model from the original DFP paper, but with new goals that are less aggressive and better suited to this more challenging setup. Specifically, the agent focuses more on gathering resources and less on attacking, because it has less health and ammunition.

In ongoing and future work, we are looking into how such skill transfer via goal adaptation can be applied in real-world tasks, including in robotic applications.
